In 1822, Charles Babbage conceptualized and began developing the Difference Engine, which is widely regarded as the first automatic computing machine and was designed to tabulate polynomial functions. The Difference Engine was designed to calculate several sets of numbers and produce hard copies of the results. Babbage later worked with Ada Lovelace, who is often considered the first computer programmer for her notes on his follow-up design, the Analytical Engine. Unfortunately, because of funding problems, Babbage was never able to complete a fully working version of the Difference Engine. In June 1991, the London Science Museum completed Difference Engine No. 2 for the bicentenary of Babbage’s birth and later completed the printing mechanism in 2000.
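
To give a feel for the kind of calculation the Difference Engine was built for, here is a minimal sketch of the method of finite differences, which lets a machine tabulate a polynomial using nothing but repeated addition once the starting values are set. The function names (`poly`, `difference_table`) and the sample polynomial are illustrative assumptions, not a model of Babbage's actual mechanism.

```python
def poly(coeffs, x):
    """Evaluate a polynomial directly; coeffs[i] multiplies x**i."""
    return sum(c * x**i for i, c in enumerate(coeffs))


def difference_table(coeffs, count):
    """Return p(0), p(1), ..., p(count - 1) using only additions after setup."""
    degree = len(coeffs) - 1
    # Setup: compute the first degree + 1 values directly.
    row = [poly(coeffs, x) for x in range(degree + 1)]

    # registers[0] holds p(0); registers[k] holds the k-th forward difference at 0.
    registers = []
    while row:
        registers.append(row[0])
        row = [b - a for a, b in zip(row, row[1:])]

    results = []
    for _ in range(count):
        results.append(registers[0])
        # Each register adds in the next-higher difference, one after another.
        for k in range(len(registers) - 1):
            registers[k] += registers[k + 1]
    return results


# Hypothetical example: tabulate x**2 + x + 41 for x = 0..4.
print(difference_table([41, 1, 1], 5))
```

After the setup step, the loop never multiplies: every new table entry comes from adding neighbouring registers, which is what makes the approach suitable for a purely mechanical device.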

When was the first computer invented?

The first mechanical computer, created by Charles Babbage in 1822, is nothing like what most would consider a computer today. Therefore, this page provides a list of all computer firsts, starting with the Difference Engine and leading to the computers we use today.

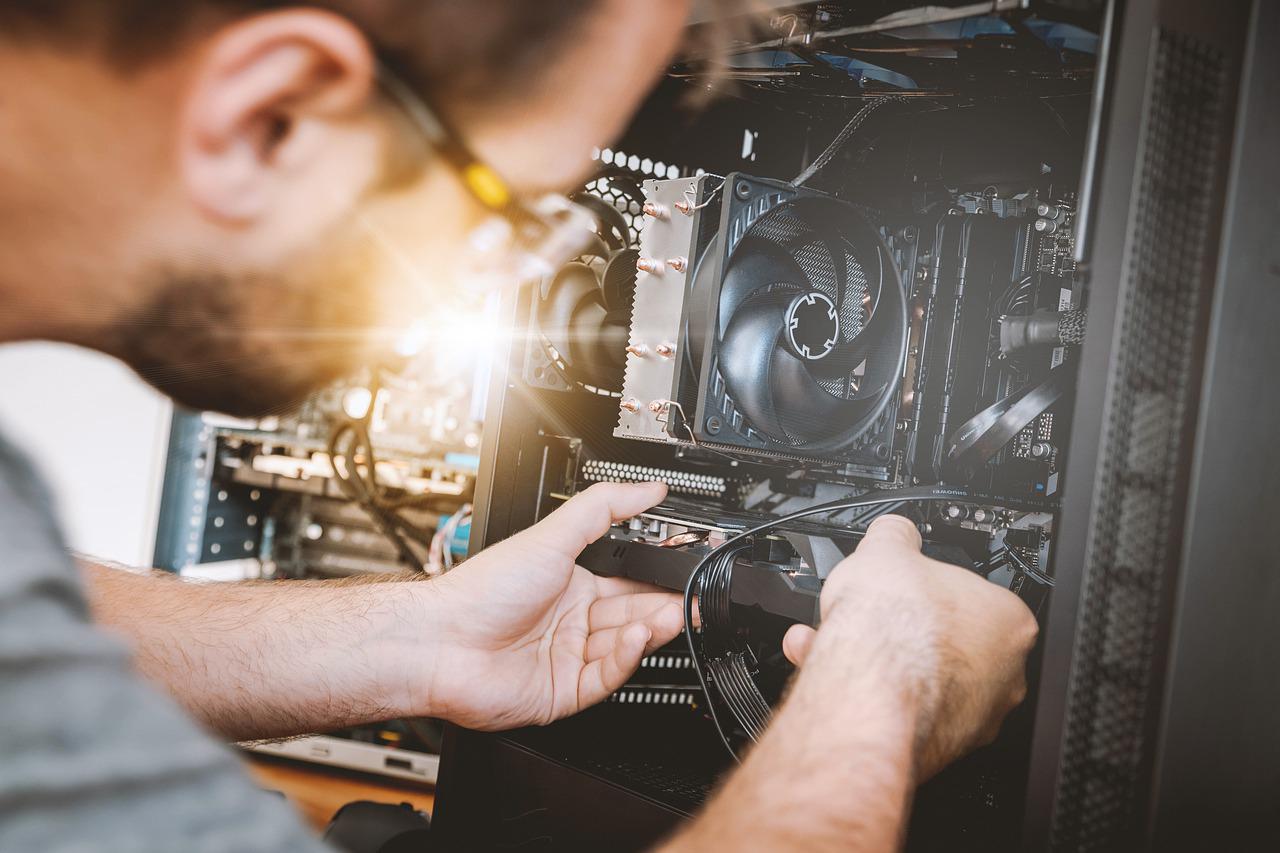

Type of computer to build and parts needed

Before you can build your computer, you need to figure out your computing needs. What is your use for the computer? Do you play games, edit pictures or videos, mix audio, surf the Internet, create documents or spreadsheets, or check e-mails? Knowing what you want to do with your computer will help you determine which hardware components you need and what type of computer you will build. For example:

Gaming or Graphics PC – Playing games, editing images, or creating and editing videos requires a more powerful graphics card for better graphics processing performance. In addition, a larger hard drive and more memory are often needed.

Audio Editing Computer – Creating, editing and mixing audio usually requires a high-quality sound card. A larger hard drive is useful, and additional memory can be useful for processing high-quality audio files.

Documents and Surfing Computer – Everyday tasks such as creating documents and spreadsheets, reading and writing e-mails, and surfing the Internet require a less powerful computer. Your computer doesn’t need a powerful graphics or sound card, a large hard drive, or extra RAM for these tasks.
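
The three examples above can be summarized as a simple lookup from intended use to the components worth spending more on. This is only a sketch: the dictionary keys, component labels, and the `components_to_prioritize` helper are made up for illustration, and real builds involve more trade-offs than this captures.

```python
# Components each kind of build benefits from upgrading (taken from the examples above).
PRIORITY_COMPONENTS = {
    "gaming_or_graphics": {"graphics card", "hard drive", "memory"},
    "audio_editing": {"sound card", "hard drive", "memory"},
    "documents_and_surfing": set(),  # no special upgrades needed
}


def components_to_prioritize(uses):
    """Combine the upgrade lists for every use the builder has in mind."""
    needed = set()
    for use in uses:
        needed |= PRIORITY_COMPONENTS[use]
    return sorted(needed)


# Someone who both plays games and mixes audio:
print(components_to_prioritize(["gaming_or_graphics", "audio_editing"]))
```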

Generations of computers

Computer generations are based on when significant technological changes occurred in computers, such as the use of vacuum tubes, transistors, and microprocessors. As of 2020, there are five generations of computers.

Go through each of the generations below for more information and examples of computers and technologies that fall into each generation.

First generation (1940 – 1956)

The first generation of computers used vacuum tubes as their main technology. Vacuum tubes were widely used in computers from 1940 to 1956. Because tubes were large components, first-generation computers were also large, and some took up an entire room.

The ENIAC is a great example of a first-generation computer. It consisted of about 18,000 vacuum tubes, 10,000 capacitors, and 70,000 resistors, weighed over 30 tons, and required a large room to house it. Other examples of first-generation computers include the EDSAC, the IBM 701, and the Manchester Mark 1.

Second generation (1956 – 1963)

The second generation of computers saw the use of transistors instead of tubes. Transistors were widely used in computers from 1956 to 1963. Transistors were smaller than tubes and allowed computers to be smaller, faster, and cheaper to build.

One of the earliest computers to use transistors was the TX-0, which was introduced in 1956. Other computers that used transistors include the IBM 7070, the Philco Transac S-1000, and the RCA 501.

Third generation (1964 – 1971)

The third generation of computers introduced the use of ICs (integrated circuits). Integrated circuits made computers even smaller than their second-generation predecessors and also made them faster.

Almost all computers from the mid to late 1960s used integrated circuits. While the third generation is considered by many to be the generation that lasted from 1964 to 1971, integrated circuits are still used in computers today. More than 45 years later, today’s computers have deep roots going back to the third generation.

Fourth generation (1971 – 2010)

The fourth generation of computers took advantage of the invention of the microprocessor, more commonly known as the CPU. Microprocessors, together with integrated circuits, made it possible for computers to fit easily on a desk and led to the introduction of the laptop.

Some of the first computers to use a microprocessor include the Altair 8800, the IBM 5100, and the Micral. Today’s computers still use a microprocessor, although the fourth generation is believed to have ended in 2010.

Fifth generation (2010 – present)

The fifth generation of computers is starting to use AI (artificial intelligence), an exciting technology with many potential applications around the world. Artificial intelligence technology and computers have advanced by leaps and bounds, but there is still room for improvement.

One of the most famous examples of artificial intelligence in computers is IBM’s Watson, which was featured as a contestant on the TV show Jeopardy!. Other well-known examples include Apple’s Siri on the iPhone and Microsoft’s Cortana on Windows 8 and Windows 10 PCs. Google’s search engine also uses AI to process user searches.

Sixth generation (future generation)

As of 2021, most people still consider us to be in the fifth generation, as AI continues to evolve. One possible candidate for the future sixth generation is the quantum computer. However, until quantum computing is further developed and widely used, it remains only a promising idea.

Some people also consider nanotechnology to be part of the sixth generation. Like quantum computing, nanotechnology is still largely in its infancy and requires further development before it can be widely used.
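
As a quick recap, the date ranges given for the five generations can be turned into a small lookup that maps a year to its generation. This is a rough sketch: the names `GENERATION_STARTS`, `GENERATION_NAMES`, and `generation_for_year` are made up for this example, and because some of the listed ranges share a boundary year (1956, 1971, 2010), the sketch assumes a boundary year belongs to the later generation.

```python
from bisect import bisect_right

# Start year of each generation, as listed in this article.
GENERATION_STARTS = [1940, 1956, 1964, 1971, 2010]
GENERATION_NAMES = [
    "First (vacuum tubes)",
    "Second (transistors)",
    "Third (integrated circuits)",
    "Fourth (microprocessors)",
    "Fifth (artificial intelligence)",
]


def generation_for_year(year):
    """Return the generation whose range contains the year, or None before 1940."""
    # Assumption: a shared boundary year counts toward the later generation.
    index = bisect_right(GENERATION_STARTS, year) - 1
    if index < 0:
        return None
    return GENERATION_NAMES[index]


for year in (1946, 1960, 1965, 1985, 2021):
    print(year, generation_for_year(year))
```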