In 2019, Microsoft and OpenAI partnered to build specialized supercomputing resources that would let OpenAI train an ever-expanding collection of advanced AI models. Doing so required cloud-computing infrastructure unlike anything the industry had attempted before.

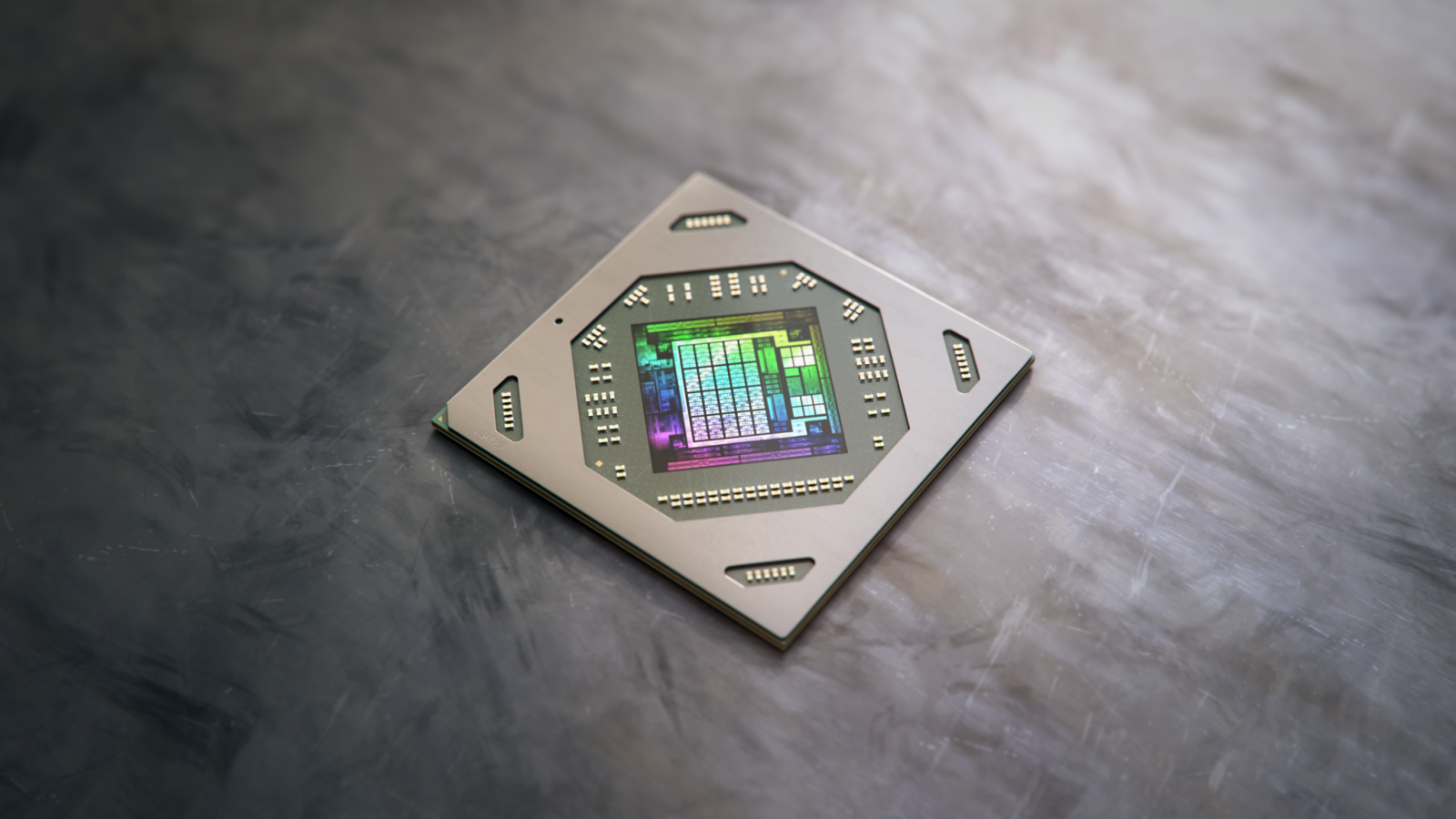

The partnership has since deepened. On March 13, 2023, Microsoft announced new, powerful, and highly scalable virtual machines equipped with the latest NVIDIA H100 Tensor Core GPUs and NVIDIA Quantum-2 InfiniBand networking. The new machines are part of an ongoing effort to meet the enormous challenge of scaling up OpenAI's model training.

“Co-designing supercomputers with Azure has been crucial for scaling our demanding AI training needs, making our research and alignment work on systems like ChatGPT possible,” said Greg Brockman, President and Co-Founder of OpenAI.

The new ND H100 v5 virtual machine (VM) lets customers scale on demand, from eight to thousands of NVIDIA H100 GPUs. The GPUs are interconnected with NVIDIA Quantum-2 InfiniBand networking, which Microsoft says will deliver significantly faster AI model performance than the previous generation.

Nidhi Chappell, Head of Product for Azure High-Performance Computing at Microsoft, said the breakthroughs came from working out how to build, operate, and maintain tens of thousands of co-located GPUs connected over a high-speed, low-latency InfiniBand network. According to Chappell, this was difficult because it had never been done before, not even by the companies that supply the GPUs and networking equipment. The team was venturing into uncharted territory, with no certainty that the hardware could be pushed to its limits without failing.

Chappell added that reaching peak performance takes extensive system-level optimization, including software that gets the most out of both the GPUs and the networking equipment. The Azure infrastructure is now optimized specifically for training large language models and is available through Azure's cloud-based AI supercomputing capabilities.

Microsoft claims that it is the only provider of the GPUs, InfiniBand networking, and unique AI infrastructure required to build transformative AI models at scale, and that this combination is available only on Microsoft Azure.

Via Microsoft