Extracting data from a website and transferring it to your computer or to another website can be a time-consuming and tedious process. Web scraping addresses this problem and has been a widely used technique for some time. Web scraping (also called data extraction, web harvesting, or screen scraping) extracts large amounts of data from websites and converts and saves it in a more user-friendly format that local computers can easily process.

Earlier, this process was done manually, but with advances in technology it is now often a matter of a few clicks in a web scraping tool. There are websites that provide scraping software and free scraper tools of various kinds, which perform the process faster, more cheaply, and with fewer errors than manual copying. Scraping web pages has become quite popular among industries and brands, whether in finance, e-commerce, or marketing, and many of them use it to extract data efficiently.

Why Web Scraping?

Proxy scraping, in which requests are routed through a third-party server, comes in handy when you need to (1) crawl a website more reliably, (2) see content that a website filters based on your geographical location, (3) avoid the IP address bans that some sites apply, and (4) send a high volume of requests to the target website. In each case the proxy lets you scrape the website anonymously.
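As a minimal sketch of routing requests through a proxy, the snippet below uses Python's standard `urllib`. The proxy address `proxy.example.com:8080`, the `User-Agent` value, and the `build_proxy_opener` helper are hypothetical placeholders rather than a real service, and the final request is left commented out because it needs a working proxy.

```python
import urllib.request


def build_proxy_opener(proxy_url):
    """Return an opener that sends HTTP and HTTPS requests via proxy_url."""
    # ProxyHandler maps each URL scheme to the proxy that should carry it.
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    opener = urllib.request.build_opener(handler)
    # Many sites expect a User-Agent header; this value is illustrative.
    opener.addheaders = [("User-Agent", "example-scraper/0.1")]
    return opener


# Hypothetical proxy address; substitute one you are allowed to use.
opener = build_proxy_opener("http://proxy.example.com:8080")

# With a real proxy, the target site sees the proxy's IP address, not yours:
# html = opener.open("https://example.com/", timeout=10).read()
```

Rotating between several such openers, each bound to a different proxy, is one common way to spread a large number of requests across IP addresses.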

Furthermore, depending on how a website's content is structured, scraping can be performed in two ways: (1) data from websites with HTML front ends can be extracted by writing code that parses the page, and (2) websites backed by an API require simulating the requests that the site itself makes each time a user visits. Both methods have their pros and cons, but the latter is generally preferred because it provides more structured data. The former is simple, but it requires new code whenever the website changes its front end.
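The difference between the two approaches can be sketched with the standard library alone. The HTML fragment, the JSON payload, and the `price` class name below are invented for illustration; they stand in for responses a real site would return.

```python
import json
from html.parser import HTMLParser

# Method 1: the data is embedded in HTML, so the scraper depends on the markup.
HTML_PAGE = '<ul><li class="price">19.99</li><li class="price">5.49</li></ul>'


class PriceParser(HTMLParser):
    """Collect the text of every <li class="price"> element."""

    def __init__(self):
        super().__init__()
        self.in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if tag == "li" and ("class", "price") in attrs:
            self.in_price = True

    def handle_endtag(self, tag):
        if tag == "li":
            self.in_price = False

    def handle_data(self, data):
        if self.in_price:
            self.prices.append(float(data))


parser = PriceParser()
parser.feed(HTML_PAGE)
html_prices = parser.prices

# Method 2: the site's API returns structured JSON, so no markup parsing is needed.
API_RESPONSE = '{"items": [{"price": 19.99}, {"price": 5.49}]}'
api_prices = [item["price"] for item in json.loads(API_RESPONSE)["items"]]

print(html_prices)
print(api_prices)
```

If the site renamed the `price` class, only the HTML parser would break, which illustrates why the API route tends to need less maintenance.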

Freely Available Scraping Tools

Scrapers can be written in many programming languages (Java, Python, Node.js, or Ruby), but doing so requires programming skill. Some software companies have therefore developed tools that make scraping accessible to the general public. These tools are known as web scrapers, web extractors, web harvesters, or crawling tools. A few that perform well and are commonly used are listed below:

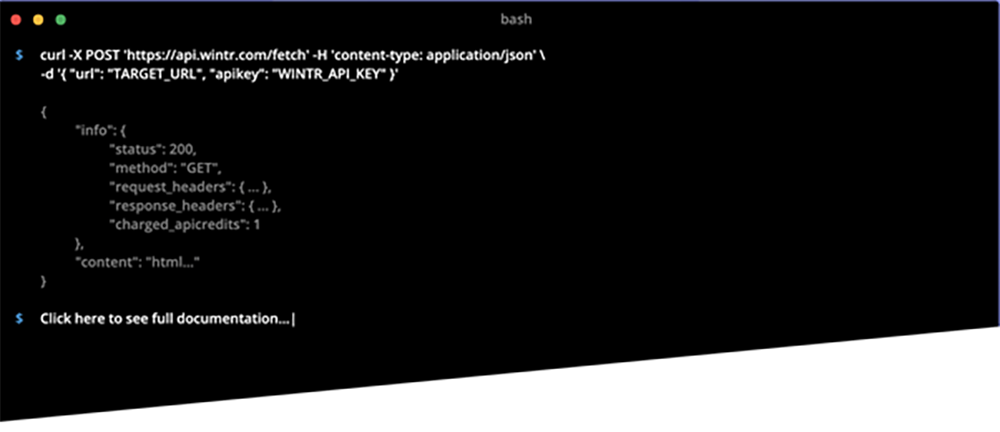

- Scraper API

Developers building web scrapers often use this tool for its speed, reliability and customization. It gives you access to a page's raw HTML with a simple API call, handling CAPTCHAs, proxies and browsers and rendering JavaScript for you.

- Octoparse

Octoparse is one of the most commonly used scraper tools because it does not require writing code to extract data. It is easy to configure thanks to its point-and-click interface, and it can export data in several formats (TXT, CSV, HTML, or Excel). Moreover, it comes with a free tier in which the user can build up to 10 crawlers.

- ParseHub

ParseHub is a free, powerful scraper tool that lets you extract data without any coding. Its users range from data scientists to analysts to journalists. Moreover, it automatically stores the extracted data on its servers.

- Scrapy

Scrapy is an open-source tool that has been one of the most popular Python libraries for years. It is fast, simple and extensible. Moreover, it handles the plumbing (proxies, queuing requests) efficiently, making it helpful and reliable for data mining, monitoring and automated testing.

- BeautifulSoup

BeautifulSoup is a Python library that parses HTML or XML data into a readable, easily searchable structure. It is very well documented and can help you retrieve the information you need from that data much faster.
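A minimal sketch of BeautifulSoup in use, assuming the `beautifulsoup4` package is installed. The HTML snippet and its tag and class names are made up for illustration.

```python
from bs4 import BeautifulSoup

# Invented sample markup standing in for a downloaded page.
html = """
<html><body>
  <h1>Product list</h1>
  <a class="item" href="/p/1">Keyboard</a>
  <a class="item" href="/p/2">Mouse</a>
</body></html>
"""

# "html.parser" is Python's built-in parser, so no extra parser is required.
soup = BeautifulSoup(html, "html.parser")

title = soup.h1.get_text()
# find_all returns every matching tag; class_ avoids the reserved word "class".
items = [(a.get_text(), a["href"]) for a in soup.find_all("a", class_="item")]

print(title)
print(items)
```

In a real scraper the `html` string would come from an HTTP response instead of a literal, but the parsing calls stay the same.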

Conclusion

Web scraping has clear advantages: it automates data extraction, making the job less tiring and more enjoyable. However, it must be used responsibly, since misuse can lead to avoidable problems such as being banned by the sites you scrape.