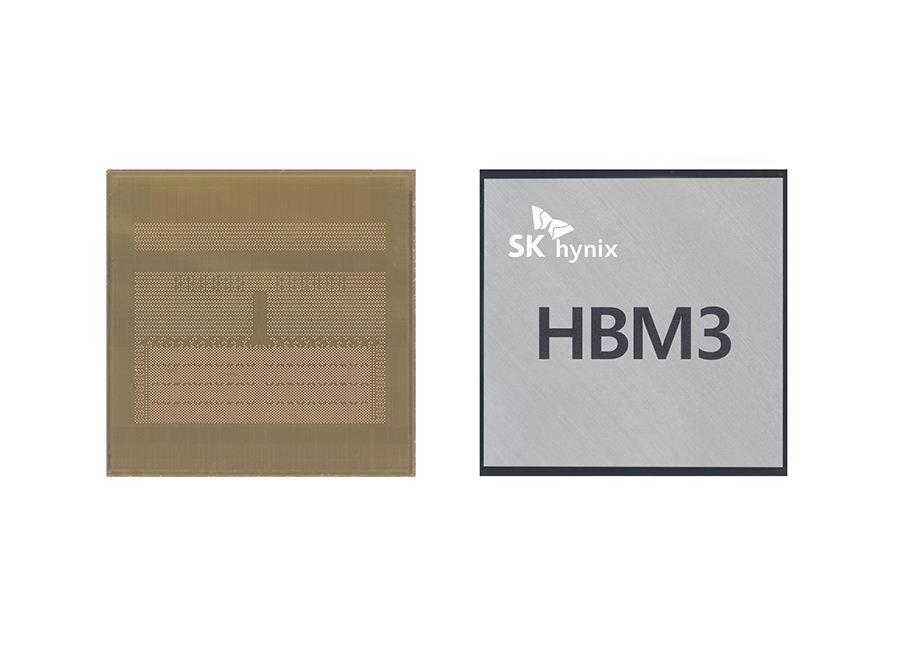

SK Hynix has confirmed that it has produced 24 GB HBM3 memory with up to 819 GB/s of bandwidth per stack. HBM3 is being developed as the next-generation memory for high-performance computing products such as GPUs and possibly CPUs, which are expected to need more capacity and higher bandwidth.

JEDEC, the body responsible for the HBM3 standard, has not yet published the final specification. SK Hynix has raised its own quoted data rate from 5.2 Gbps to 6.4 Gbps per pin. However, the company has not confirmed whether these figures match what it intends to manufacture for next-generation accelerators.

The module shown already uses 12-Hi stacks, each attached through a 1024-bit interface. While the interface width has not changed since HBM2, the taller stacks raise capacity and the higher data rate raises bandwidth per stack from 461 GB/s (HBM2e) to 819 GB/s.
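The per-stack figures follow from the interface width and the per-pin data rate. A minimal sketch of that arithmetic is below; the function name is made up for illustration, and the 3.6 Gbps rate for the older memory is an assumption inferred from the 461 GB/s figure, not something stated above.

```python
def stack_bandwidth_gb_s(pin_rate_gbps: float, bus_width_bits: int = 1024) -> float:
    """Peak bandwidth of one HBM stack in GB/s.

    Every pin on the interface moves pin_rate_gbps gigabits per second;
    dividing by 8 converts bits to bytes.
    """
    return pin_rate_gbps * bus_width_bits / 8


# Assumption: 3.6 Gbps per pin, inferred from the 461 GB/s figure.
hbm2e_gb_s = stack_bandwidth_gb_s(3.6)
# 6.4 Gbps per pin, as quoted by SK Hynix for HBM3.
hbm3_gb_s = stack_bandwidth_gb_s(6.4)

print(f"HBM2e: {hbm2e_gb_s:.1f} GB/s per stack")
print(f"HBM3:  {hbm3_gb_s:.1f} GB/s per stack")
```

Because the bus stays at 1024 bits in both cases, the whole gain in this calculation comes from the faster pins.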

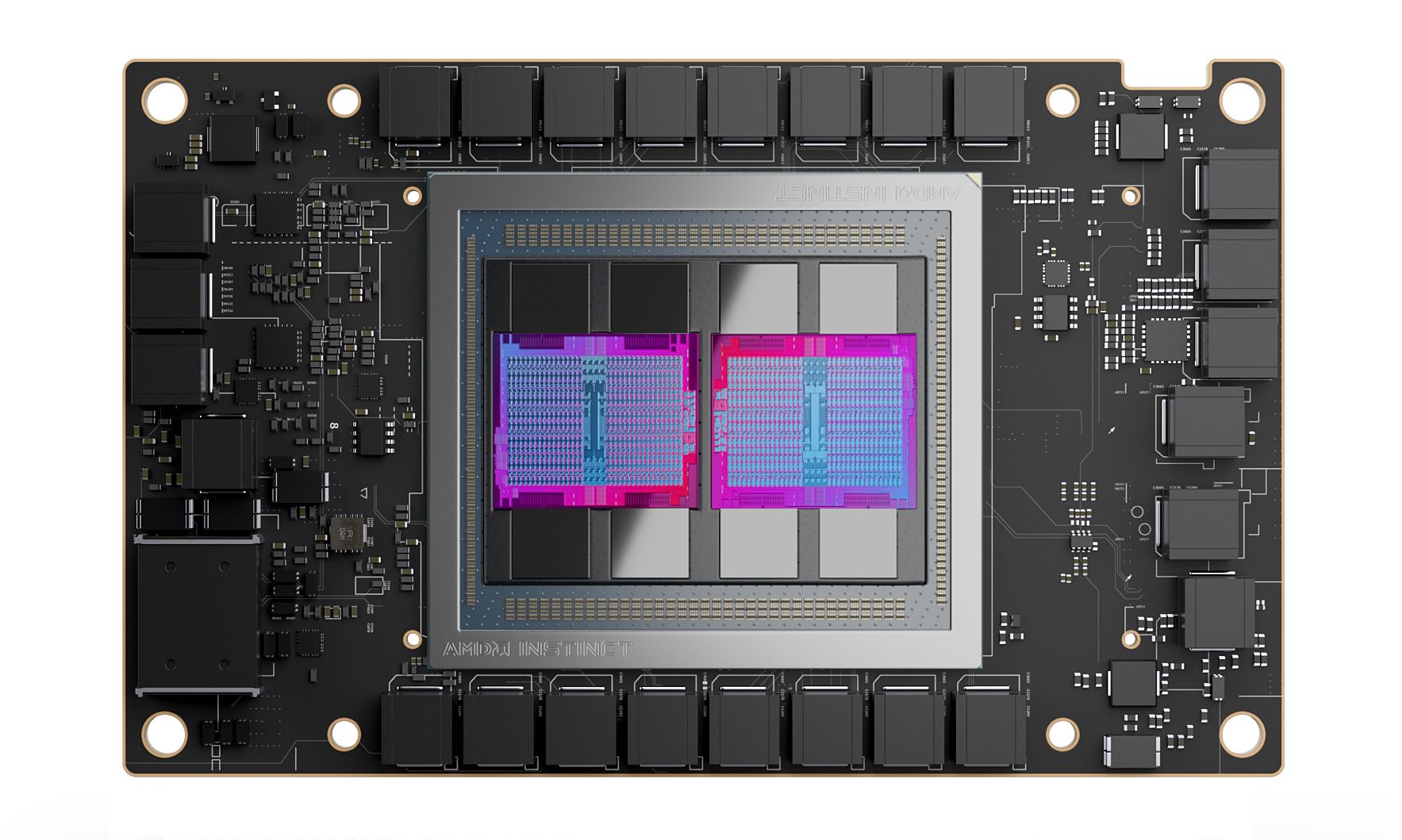

This Monday, AMD announced the Instinct MI250X accelerator. It uses 8 HBM2e stacks running at 3.2 Gbps, each with 16 GB of capacity, for 128 GB across the whole device. Meanwhile, TSMC has already disclosed plans for its CoWoS-S (Chip-on-Wafer-on-Substrate) packaging technology, which will support up to 12 HBM stacks. The first products using this technology are expected to launch in 2023.

By the time such products reach the market, HBM3 should be readily available. In theory, a package with twelve 12-Hi HBM3 stacks from SK Hynix would offer 288 GB of capacity and up to 9.8 TB/s of peak bandwidth.
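The device-level totals are simply the per-stack numbers multiplied by the stack count. The sketch below reproduces them under the assumption of a 1024-bit interface per stack and decimal units (1 TB/s = 1000 GB/s); the helper name is hypothetical, and the MI250X line is included only as a point of comparison using the figures quoted earlier.

```python
def device_totals(stacks: int, gb_per_stack: int, pin_rate_gbps: float,
                  bus_width_bits: int = 1024) -> tuple[int, float]:
    """Return (capacity in GB, peak bandwidth in TB/s) for a multi-stack device."""
    capacity_gb = stacks * gb_per_stack
    # Per-stack bandwidth in GB/s is pin rate times bus width, divided by 8 bits per byte.
    per_stack_gb_s = pin_rate_gbps * bus_width_bits / 8
    bandwidth_tb_s = stacks * per_stack_gb_s / 1000
    return capacity_gb, bandwidth_tb_s


# Hypothetical package: twelve 24 GB HBM3 stacks at 6.4 Gbps.
hbm3_cap, hbm3_bw = device_totals(12, 24, 6.4)
# AMD Instinct MI250X as described: eight 16 GB HBM2e stacks at 3.2 Gbps.
mi250x_cap, mi250x_bw = device_totals(8, 16, 3.2)

print(f"12x HBM3: {hbm3_cap} GB, {hbm3_bw:.1f} TB/s")
print(f"MI250X:   {mi250x_cap} GB, {mi250x_bw:.1f} TB/s")
```

These are theoretical peaks; real products may ship with fewer stacks or lower clocks.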

https://twitter.com/aschilling/status/1458820109523369992